
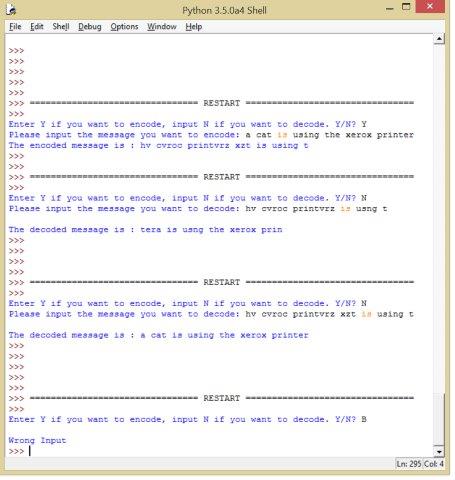

In this research article, a multi-layered convolutional neural network (MLCNN) based auto-CODEC for audio signal enhancement that utilizes Mel-frequency cepstral coefficients (MFCC) is proposed. For both training and testing, the MLCNN takes as input MFCCs computed over different frames of the noise-contaminated audio signal. The model has been trained and tested with 80:20 and 70:30 splits of the available data, and the method has been verified and validated on the MNIST database. Validation shows that the proposed MLCNN model achieves an accuracy of 93.25%.

The performance of the MLCNN has been evaluated using short-time objective intelligibility (STOI), perceptual evaluation of speech quality (PESQ), and cosine similarity. The proposed model has been compared with previously reported models, and the comparison shows that it outperforms them. The cosine similarity results further show that the MLCNN provides a high level of security, which makes it suitable for secure applications.
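The abstract states that the MLCNN consumes MFCCs computed over frames of the noisy signal but does not give the feature settings. As a rough illustration, the sketch below computes MFCCs in pure Python: framing, a Hamming window, a power spectrum, a triangular mel filterbank, log compression, and a DCT-II. The 16 kHz sample rate, 25 ms frames (400 samples), 10 ms hop (160 samples), 512-point FFT, 26 mel filters, and 13 coefficients are common textbook defaults assumed here, not values taken from the paper. `mfcc` and its helpers are hypothetical names.

```python
import cmath
import math
import random


def frame_signal(x, frame_len, hop):
    """Split a 1-D signal into overlapping frames; trailing samples that do not fill a frame are dropped."""
    return [list(x[i:i + frame_len]) for i in range(0, len(x) - frame_len + 1, hop)]


def power_spectrum(frame, n_fft):
    """Hamming-window a frame and return |DFT|^2 / n_fft for bins 0..n_fft/2."""
    n = len(frame)
    if n_fft < n:
        raise ValueError("n_fft must be at least the frame length")
    windowed = [s * (0.54 - 0.46 * math.cos(2 * math.pi * i / (n - 1))) for i, s in enumerate(frame)]
    # Naive DFT over the n real samples; zero-padding to n_fft is implicit because
    # padded samples contribute nothing. A real pipeline would use an FFT instead.
    twiddle = [cmath.exp(-2j * math.pi * m / n_fft) for m in range(n_fft)]
    return [abs(sum(windowed[t] * twiddle[(k * t) % n_fft] for t in range(n))) ** 2 / n_fft
            for k in range(n_fft // 2 + 1)]


def hz_to_mel(f):
    return 2595.0 * math.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    return 700.0 * (10 ** (m / 2595.0) - 1.0)


def mel_filterbank(n_mels, n_fft, sample_rate):
    """Triangular filters spaced evenly on the mel scale between 0 Hz and Nyquist."""
    low, high = hz_to_mel(0.0), hz_to_mel(sample_rate / 2)
    mel_points = [low + i * (high - low) / (n_mels + 1) for i in range(n_mels + 2)]
    bins = [int(math.floor((n_fft + 1) * mel_to_hz(m) / sample_rate)) for m in mel_points]
    fbank = []
    for j in range(1, n_mels + 1):
        left, center, right = bins[j - 1], bins[j], bins[j + 1]
        row = [0.0] * (n_fft // 2 + 1)
        for k in range(left, center):   # rising edge of the triangle
            row[k] = (k - left) / (center - left)
        for k in range(center, right):  # falling edge of the triangle
            row[k] = (right - k) / (right - center)
        fbank.append(row)
    return fbank


def dct_ii(values, n_out):
    """Orthonormal type-II DCT, keeping only the first n_out coefficients."""
    n = len(values)
    out = []
    for k in range(n_out):
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        out.append(scale * sum(v * math.cos(math.pi * k * (2 * i + 1) / (2 * n))
                               for i, v in enumerate(values)))
    return out


def mfcc(signal, sample_rate=16000, frame_len=400, hop=160, n_fft=512, n_mels=26, n_mfcc=13):
    """Return one list of n_mfcc coefficients per frame (assumed default settings)."""
    fbank = mel_filterbank(n_mels, n_fft, sample_rate)
    features = []
    for frame in frame_signal(signal, frame_len, hop):
        spec = power_spectrum(frame, n_fft)
        energies = [sum(w * p for w, p in zip(row, spec)) for row in fbank]
        log_energies = [math.log(e + 1e-10) for e in energies]  # epsilon avoids log(0)
        features.append(dct_ii(log_energies, n_mfcc))
    return features


if __name__ == "__main__":
    rng = random.Random(0)
    sr = 16000
    clean = [math.sin(2 * math.pi * 440 * t / sr) for t in range(sr // 10)]  # 0.1 s, 440 Hz tone
    noisy = [s + 0.3 * rng.gauss(0, 1) for s in clean]                        # additive Gaussian noise
    feats = mfcc(noisy, sample_rate=sr)
    print(len(feats), "frames x", len(feats[0]), "coefficients")
```

Each row of the result is one frame's coefficient vector. Stacking rows over time gives a 2-D feature map of the kind a convolutional network could consume. For real audio, a vectorized FFT such as NumPy's `numpy.fft.rfft` would replace the naive DFT.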
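The abstract reports 80:20 and 70:30 train/test ratios and uses cosine similarity as one evaluation measure, without saying which vectors are compared. The sketch below assumes that the comparison is made between corresponding feature frames (for example, MFCC vectors) of a reference signal and the enhanced output, averaged over frames. `train_test_split`, `cosine_similarity`, and `mean_frame_similarity` are hypothetical helpers (this `train_test_split` is a minimal stand-in, not scikit-learn's function), and the clip identifiers and vectors in the usage block are made-up placeholders. STOI and PESQ require considerably more machinery and are not reproduced here.

```python
import math
import random


def train_test_split(items, train_ratio, seed=0):
    """Shuffle a copy of items; the first round(train_ratio * n) go to training, the rest to testing."""
    if not 0.0 < train_ratio < 1.0:
        raise ValueError("train_ratio must be strictly between 0 and 1")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_train = round(train_ratio * len(shuffled))
    return shuffled[:n_train], shuffled[n_train:]


def cosine_similarity(a, b):
    """cos(theta) = (a . b) / (|a| |b|); 1.0 means the vectors point in the same direction."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def mean_frame_similarity(reference_frames, enhanced_frames):
    """Average cosine similarity over corresponding frames (assumed aggregation, not from the paper)."""
    if not reference_frames or len(reference_frames) != len(enhanced_frames):
        raise ValueError("need the same non-zero number of reference and enhanced frames")
    sims = [cosine_similarity(r, e) for r, e in zip(reference_frames, enhanced_frames)]
    return sum(sims) / len(sims)


if __name__ == "__main__":
    clips = [f"clip_{i:03d}" for i in range(100)]  # hypothetical clip identifiers
    for ratio in (0.8, 0.7):
        train, test = train_test_split(clips, ratio)
        print(f"{ratio:.0%} train: {len(train)} train / {len(test)} test")
    ref = [[1.0, 2.0, 3.0], [0.5, -1.0, 2.0]]  # placeholder reference frames
    enh = [[1.1, 1.9, 3.2], [0.4, -0.8, 2.1]]  # placeholder enhanced frames
    print("mean cosine similarity:", round(mean_frame_similarity(ref, enh), 4))
```

Because cosine similarity ignores vector magnitude, it measures how closely the shape of each enhanced frame matches the reference rather than its overall level. A study would need to state which vectors it compares for the reported figures to be reproducible.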